Set point Regulation of Linear Continuous time Systems Using Neuromorphic Vision Sensors

Supplementary Material

I Introduction

Simultaneous localization and mapping (SLAM)[5] is the problem of building a map of the environment and concurrently estimating the state of the robot. Accurate and robust SLAM algorithms are the keys to unlock autonomous robotic navigation.

It is typical among popular SLAM methods to represent the state discretely at the measurement times of one of the sensors, e.g., the camera in vision-based SLAM. If the other sensor does not have measurements at the same times, techniques such as interpolation need to be employed to express error terms. |  |  |

Among the plethora of sensors providing relevant information for localization and mapping, cameras are a very convenient solution in virtue of their information-rich measurements, low cost, and low weight. Vision-based SLAM algorithms use cameras as the main, but not necessarily the only sensor modality. The most common vision-based SLAM formulation is based on the discrete-time (DT) trajectory representation[8]. Namely, the poses of the camera are estimated at the time a new measurement, i.e., an image, is available. The discrete-time formulation has the benefit of a very consolidated theory. In the past years, many successful applications have been seen[5]. Its limitations are well understood and are addressed in ongoing research.

Although cameras can be used as the only source of information in SLAM systems, fusing multiple sensor modalities is beneficial for accuracy and robustness. In discrete-time SLAM, customized algorithms are necessary to include asynchronous measurements coming from different sources in the estimation process[20]. Similarly, ad-hoc solutions are needed to avoid adding a new state to the estimation problem every time a new measurement is available[9]. In this way, high-rate sensors can be incorporated, thus limiting the increase in computational complexity.

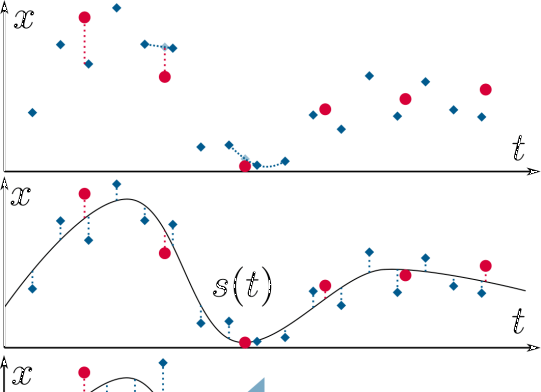

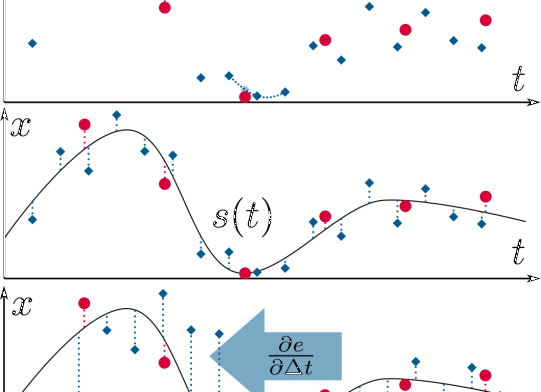

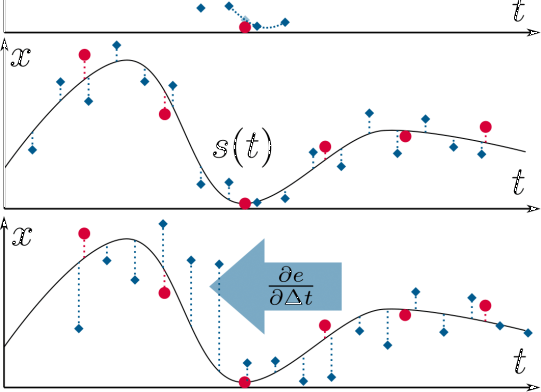

In the past years, researchers have been investigating the use of continuous-time (CT) representations to encode the camera trajectory[11, 17, 15]. Continuous-time based probabilistic SLAM formulations have been derived similarly to the discrete-time case. In particular, applications of continuous-time trajectory representations have been used for multi-sensors calibration[11, 10], planning[7], and 3D reconstruction[26, 16]. The continuous-time formulation brings several advantages to the estimation problem. Firstly, continuous-time trajectories can be sampled at any time. This makes it easy to fuse asynchronous sensors and estimate time offsets, see Fig.1. Such property is also beneficial for sensors with continuous stream of data, e.g., LiDARs, rolling shutter cameras, and event cameras. Secondly, the continuous-time formulation removes the need to include an optimization variable for every sensor measurement. The computational complexity of the optimization problem is kept bounded, allowing to easily include high-rate sensors, such as inertial measurement units (IMU), in the estimation process. However, the continuous-time representation introduces a prior on the smoothness of the trajectory. Modeling this prior such that it can generalize to different levels of the trajectory smoothness is not an easy task. For example, when using polynomial functions for the continuous-time representation, a not high enough degree of the polynomial function would lead to a loss of details in the trajectory estimates due to excessive smoothing.

To the best of our knowledge, there is no systematic comparison between the continuous- and discrete-time formulations for vision-based SLAM. Such systematic analysis is fundamental to guide the robotic practitioners in the design of future SLAM solutions. Therefore, we perform an extensive quantitative analysis to understand the respective advantages and limitations of the two trajectory representations. We focus on batch SLAM with visual, inertial, and global positional (i.e., Global Positioning System (GPS)) measurements and also investigate the contribution of each sensor modality. IMU and GPS provide local high-rate and global low-rate measurements, respectively, which are complementary to the camera measurements. Camera, IMU, and GPS measurements are fused together to obtain locally accurate and long-term drift-free trajectory estimates. We run experiments in both hardware-in-the-loop simulation and on real-world trajectories of flying and ground robots.

Our experiments indicate that discrete-time and continuous-time representations produce equivalent results when the sensors are time-synchronized. However, when there is an offset in the time synchronization, continuous-time is superior. The main reason behind this result is that the simplifying assumptions made for estimating the time offsets in discrete time do not always hold. These finding are valid for both aerial and ground robots.

To summarize, the main contributions of this work are:

-

An in-depth study on the comparison of discrete- and continuous-time trajectory representations in vision-based SLAM.

-

Extensive experimental evaluation in hardware-in-the-loop simulation and on real-world data collected from flying and ground robots.

-

A modular, efficient software architecture including state-of-the-art algorithms to solve the SLAM problem in the discrete and continuous time. We release the code fully open-source.

Ii Related Work

A popular choice for approximating continuous functions are temporal basis functions. In the set of temporal basis functions, B-splines[11, 22] have properties that are useful for trajectory representation: locality and smoothness. B-splines define the continuous time evolution by using a set of control points. Sampling at any time only depends on a local subset of the control points (locality). The size of this subset corresponds to the order of the B-spline. A B-spline of order is a function of class (smoothness). Other suitable choices of basis functions are wavelets[3]. Gaussian processes have also been used to represent continuous-time trajectories for batch state estimation problems[2].

Recent works have tried to reduce the computational complexity of sampling and computing derivatives from B-splines. In[22], a novel derivation for the computation of the time derivatives of cumulative B-spline is proposed. This approach reduces the computational complexity from quadratic to linear in the order of the spline. A novel efficient non-uniform split interpolation for cumulative B-splines is proposed in[13].

The most related applications of continuous-time trajectory representation to our work are multi-sensor calibration[11, 10], 3D reconstruction[17, 26], visual-inertial odometry for event cameras[15], and batch state estimation[2].

The literature on the classical discrete-time SLAM formulation is very broad. Due to space constraints, we refer the reader to the survey paper[5] for an overview on the discrete SLAM formulation. Differently from continuous time, specific algorithms are needed to estimate the time offsets between different sensors. In our discrete-time implementation, we use the method proposed in[20] to estimate the camera-IMU time offset. A similar approach to the one in[14] is used for the GPS-IMU time offset estimation. We describe these two methods in more details in Sec.III-B.

|  |

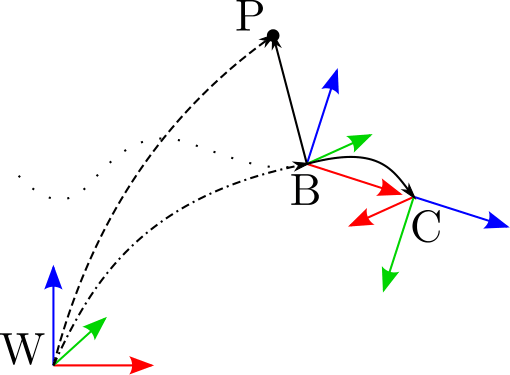

| (a) Estimation flowchart | (b) Reference frames |

(b) The frames and the positions involved in the estimation process. Solid black lines indicate optimizable, calibrated transformations, the dashed lines the position measurements, and the dashed-dotted lines the time-varying, estimated transformation. The dotted lines indicate the trajectory of the latter. Red, green and blue arrows indicate x-, y- and z-axes of the frames.

Iii Methodology

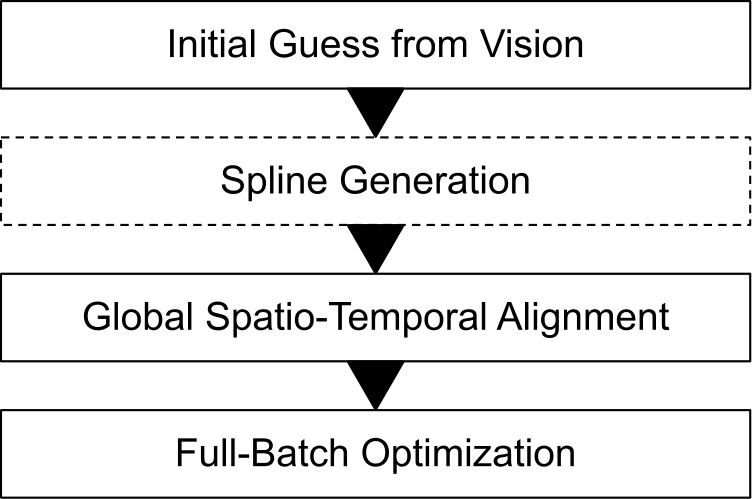

We solve the estimation problem using a multi-step approach that involves a few initialization steps before the full-batch optimization. Fig.2 (a) shows the flow chart of the optimization pipeline for the both continuous- and discrete-time approaches. The reference frames are depicted in Fig.2 (b). is the fixed world frame, whose axis is aligned with the gravity. When available, GPS measurements are expressed in this frame. is the moving body frame. We set it equal to the IMU frame. is the camera frame. is the GPS antenna position. Note that this is not a full 6-DOF frame, as no orientation is provided by the GPS measurement. We use the notation to represent a quantity in the world frame . Similar notation applies for each reference frame. The position, orientation, and velocity of with respect to at time are written as , part of the 3-D rotation group , and , respectively. We use 4 4 matrices, (the special Euclidean group) to express 6-DOF Euclidean transformations.

GPS measurements consist in the position of the GPS antenna in the world frame, . The position of the -th 3-D visual landmark in the world frame is . The set contains the 3-D visual landmarks. The time is the time offset between camera and IMU such that . Using the same convention, is the GPS-IMU time offset.

The state vectors containing the optimization variables are

and , for the continuous- and discrete-time approaches, respectively. They are introduced in Sec.III-A and Sec.III-B. The transformation is the extrinsic calibration matrix between camera and IMU. The vector is the position of the GPS antenna in the body frame. We use different representations for the IMU biases in continuous time, , and discrete time, . They are introduced in Sec.III-A and Sec.III-B, respectively.

In this work, we focus on global shutter cameras, which are the common camera choice in vision-based SLAM systems. The initial camera poses and 3-D landmarks are obtained by using COLMAP[21]

. COLMAP is a monocular Structure-from-Motion pipeline which is widely used in the computer vision and robotic community. The camera poses and 3-D landmarks reconstructed by COLMAP are expressed in the scaleless reference frame

, which is a fixed frame defined such that the first camera pose is equal to the identity. We use the notation to denote a sensor measurement and the notation to denote a ground-truth measurement.

Iii-a Continuous-time representation

We use cumulative B-splines for the continuous-time trajectory representation. A uniform B-spline of order and control nodes is defined by

where is the time variable. The control points are equally spaced in time by an interval , , and are given by the De Boor-Cox recurrence relations[6]. For cumulative B-splines, Eq. (1) can be rewritten as

with , and . The coefficients of a uniform B-spline are constant and can be written in matrix form[18]. This matrix form can be also used for cumulative B-splines. Combining the matrix formulation[18] with the uniform representation proposed in[22], the calculations can be simplified and the equation for sampling from the B-spline at time becomes

The uniform time representation proposed in[22] defines , with , as the normalized time elapsed since the start of the segment. Defined , can be computed as . The coefficients are constant and only depend on the order of the B-spline (see Eq. (18-21) in[22]). The difference vector is . In[23], the cumulative B-spline formulation was derived for elements of Lie groups. In the Lie group , the addition corresponds to the matrix multiplication, but scaling by a scalar is not defined. The scaling operation requires to map an element, , of the group to a vector space (the Lie algebra), performing the scaling operation, and then remapping to the Lie group: . Elements of the Lie algebra can be mapped to the Lie group by the exponential map, Exp( ). The inverse operation is the logarithm map, Log( ). The cumulative B-spline formulation for is

where . We use two B-splines to represent the position, , and orientation, , of the trajectory we are interested in estimating. We use the notation , with , and the number of control nodes, to denote the continuous-time representation of a 6-DOF trajectory. Using one ( ) or two splines to represent a 6-DOF trajectory is equivalent, but the two splines solution is computationally less expensive[22, 12, 17]. We compute the time derivatives of the B-spline as proposed in[22], which reduces the number of matrix operations from to .

Iii-A1 Initialization

The first step of our continuous-time trajectory estimation pipeline is to fit a B-spline to the camera poses estimated by COLMAP: . The initial values of the control nodes, and , are obtained by linearly interpolating the COLMAP camera poses at the times , where is the time of the first camera pose and is the inverse of the control node frequency. Then, the control nodes are optimized to minimize the cost function

where is the number of errors. The measurements are obtained by the linear interpolation of the camera poses and , with . We similarly use spherical linear interpolation (SLERP) for the rotations . We minimize the cost function till convergence, which usually happens in less than 20 iterations. The result of this step is the continuous-time trajectory , that represents the camera poses in the reference frame . We transform the camera poses in body poses and obtain the trajectory , . The initial value of is obtained by using the calibration toolbox Kalibr[10].

The second step of our continuous-time trajectory estimation pipeline is to estimate the actual scale of the trajectory as well as to find a transformation that aligns it to the gravity aligned frame. When GPS measurements are available, we obtain an initial estimation of the 6-DOF transformation and scale using the method proposed in[25]. This method finds the least-squares solution that minimizes, with respect to and , the differences between , sampled from the spline, and the GPS measurements . Here we assumes that the GPS antenna-IMU positional offset, , is null. Then, , , , and , are estimated by minimizing the cost

where the -th GPS measurement, , is available at time . The predicted antenna position is sampled from the spline as: , , and .

In the case when we do not use GPS measurements, we leverage the IMU measurements to obtain an initial estimate of the scale. We integrate the IMU measurements for a short period of time, usually few seconds, to obtain a small trajectory segment. This trajectory is expressed in a gravity aligned frame, , which is estimated from the accelerometer measurements collected when the IMU is static. Similarly as before, we use[25] to obtain the transformation . This transformation is applied to transform to the frame .

Iii-A2 Full-batch optimization

We use and estimated in the initialization step to transform the trajectory to the global frame (or in the case when GPS is not used): . Similarly, the 3-D landmarks are also transformed to : . In the full-batch optimization, the state vector , is estimated by minimizing the cost function

Optimizing this cost function results in the maximum a posteriori (MAP) estimation of the state vector

, which is derived by using the probabilistic continuous SLAM formulation and the Gaussian distribution for all the measurements

[11]. The error is the visual residuals, which describes the re-projection error of the landmark . The camera pose in the world frame is obtained by sampling and inverting the position and orientation from the spline at : , . The set contains all the landmarks that project to the frame . The image feature measurements are obtained from COLMAP. The function denotes the camera projection model and is the timestamp of the image.

The value is the -th accelerometer residual with respect to the measurement . The quantity is the second derivative of the B-spline encoding the trajectory positions. The vector represents the gravity. We use cubic B-splines to represent accelerometer and gyroscope biases, and as in[10]. The -th gyroscope residual is . We refer the reader to Eq. (38) in[22] for the formula to derive . The errors and are residuals on the rate of the bias changes. The GPS errors are the same as the ones defined in Eq. (6). The matrices

are the weights of the residuals. Their entries are derived from the sensor noise characteristics. We assume Gaussian noise with standard deviation of 1 pixel for the 2-D image features.

Iii-B Discrete-time representation

In the discrete-time formulation, the trajectory is represented by the body poses at the rate of the camera: . The camera-IMU time offset is estimated using the method proposed in[20], which is integrated in VINS-Mono[19], a state-of-the-art visual-inertial odometry pipeline. This method proposes to shift the 2-D image features to account for the time offset between camera and IMU measurements. It makes the assumption that the camera motion has constant velocity in a short period of time (e.g., between consecutive frames), and, based on this assumption, it calculates the velocity of the 2-D features on the image plane. This velocity is then used to shift the feature position in the small period of time that corresponds to the camera-IMU time delay (see Eq. (4) in[20]). This allows to include the time offset in the estimation process. To define the GPS errors, the trajectory is interpolated at the time of the GPS measurements. The IMU-GPS time offset is taken into account in the interpolation factor similarly as in[14].

Iii-B1 Initialization

Similarly to Sec.III-A1, we compute the body poses from the camera poses estimated by COLMAP and then transform them to the world frame using the 6-DOF and scale transformation obtained by applying[25].

Iii-B2 Full-batch optimization

Using a similar probabilistic SLAM formulation as in Sec.III-A2, we derive the cost function to minimize

The state vector is . The set contains the velocity vectors: . The set

contains the accelerometer and gyroscope bias vectors:

and . The initial 3-D landmarks positions in are obtained similarly as described inIII-A2. The reprojection errors and the GPS errors are defined in the same way as in Sec.III-A2 with the only difference that trajectory poses are represented in discrete time. The errors are the inertial residuals. We compute these residuals using the IMU preintegration formulation proposed in[9]. Each inertial error constrains the consecutive and poses and velocities according to the preintegrated IMU measurements in (see Eq. (45) in[9]). The errors and constrain the bias random walk (see Eq. (48) in[9]).

Iv Experiments

We compare the continuous- and discrete-time representations in terms of accuracy of the estimated trajectory and time offsets. To this end, we use the metrics[27]:

-

Positional absolute trajectory error ( ) [m] : .

-

Rotational absolute trajectory error ( ) [deg] :

In the case of continuous time, the trajectory is sampled at the timestamp of the camera measurements to obtain and . We run multiple experiments in hardware-in-the-loop simulation and on two real-world datasets. The hardware-in-the-loop simulation allows a thorough evaluation of the capability of the two trajectory representations in estimating the time offsets since ground-truth values are known. We investigate if the type of robot, flying or ground robot, has effects on the trajectory representation using the two real-world datasets. We use the Ceres Solver[1] and choose the Levenberg-Marquardt algorithm for the optimization. Derivatives are computed using the automatic differentiation method included in the solver.

We ran all the experiments on Ubuntu 18.04 workstation with an Intel Core i7 3.2GHz Processor and used 8 cores for the optimization. In all the experiments, the optimization problem is solved until convergence. This is always achieved in less than 50 iterations.

Iv-a Hardware-in-the-Loop Simulation: EuRoC Dataset

The EuRoC dataset[4]

contains sequences recorded on-board a hex-rotor flying robot equipped with a stereo camera and an IMU. This dataset is well-known for accurate ground-truth and hardware synchronizated sensors. The camera-IMU time offset can be assumed to be zero. We only use the sequences labeled with V_, which contain 6-DOF ground-truth from a motion capture system. Sequences are classified (see Table 2 in

[4]) as easy, medium, or hard, reflecting the level of difficulty due to illumination conditions, scene texture and vehicle motion. Difficult sequences contain challenging illumination conditions, (e.g., motion blur), and fast motions with average linear and angular velocities up to 0.9 m and 0.75 rad , respectively.

We simulate GPS measurements by downsampling and corrupting the ground-truth positions with zero-mean Gaussian noise. The rate of the simulated GPS measurements is 10 Hz and the standard deviation of the Gaussian noise is m. The position offset is equal to . We only use images from the right camera.

Iv-A1 Ablation study on the B-spline

We conducted a study to evaluate the effects of the order and frequency of the control points of the B-spline on the trajectory and camera-IMU time offset estimates. The initial value of the time offset was set to 0 ms. The results of the ablation study on the order of the B-spline are in TableI. A B-spline of order 6, which results in a cubic polynomial encoding accelerations, is needed to correctly estimate the camera-IMU time offset. This conclusion agrees with the findings in[10]. An order higher than 6 does not have any effect on the estimation results. When the spline order is 6, the values of the time offset are close but not exactly 0 ms. Since we solve the optimization till convergence, the solution has reached a local minimum of the cost function. How far is the local minimum from the global minimum is unknown, and, in general, even the unknown global optimum of the MAP estimation can be different from the ground-truth due to modeling errors.

We set the order of the spline to 6 and performed the ablation study on the control node frequency. The results are in TableII. The values of ATE and suggest that a frequency of 10 Hz is the optimal choice. Increasing to 20 Hz does not give any significant advantages, while making the optimization computationally more expensive. When the frequency is high, e.g., 100 Hz, the convergence to a good solution is hard to achieve. We conclude that order 6 and control nodes frequency 10/20 Hz are appropriate parameters for B-spline representing trajectories in this dataset.

Iv-A2 Evaluation of the trajectory representation

In this set of experiments, we evaluated the continuous- and discrete-time trajectory representations in terms of , , accuracy in estimating the camera-IMU time offset, and computational cost. Following the findings of Sec.IV-A1, we used B-spline of order 6 and control node frequency of 10 Hz. To evaluate the accuracy in estimating the camera-IMU time offset, we simulated delays in the camera data stream, similarly to[20]. We ran experiments with delays of 0, 10, and 20 ms. The results of this comparison are in TableIII. These results suggest that when the camera and IMU are time-synchronized both trajectory representations produce similar accuracy (see the column corresponding to ). However, using continuous time gives a lower in the sequences V102, V103, V202, and V203, which are medium and difficult sequences containing fast motion of the robot.

When the camera and IMU are not time-synchronized, continuous time is the best trajectory representation. This representation can properly estimate the time offset and produces ATE similar to the case of time-synchronized sensors. In particular, the discrete-time representation suffers in estimating the camera-IMU time offset in fast trajectories. This can be seen from the large difference between the estimated and the true time offset in the sequences V102, V103, V202, and V203. The reason for this result is the assumption of camera motion with constant velocity in the period of time between consecutive camera frames, which is needed to compute the velocity of the 2-D features as described in Sec.III-B. For agile motion, this assumption is no longer accurate. TableIV contains the optimization time, which is the time that the solver takes to converge.

Iv-A3 Ablation study on the contribution of the different sensor modalities

In this section, we are interested in studying the contributions of each sensor modality, i.e., vision, inertia and GPS, to the accuracy of the trajectory estimation. To this end, we solved the optimization problems in Eq. (III-A2) and Eq. (III-B2) for the continuous- and discrete-time case respectively, with all possible combinations of at least two sensors. For the continuous-time representation, we used B-splines of order 6 with control node frequency of 10 Hz. The values of and for this analysis are in TableV and TableVI. The results show that vision is the most important sensor modality. For both trajectory representations, the vision-GPS configuration produces the lowest in most of the sequences. In this case, the COLMAP reconstructed trajectory is transformed to the gravity aligned frame by using noisy simulated GPS measurements and then re-optimized together with those measurements. COLMAP is able to reconstruct an accurate trajectory on every sequence of this dataset. For this reason, including the inertial measurements in the estimation process does not bring any significant benefit.

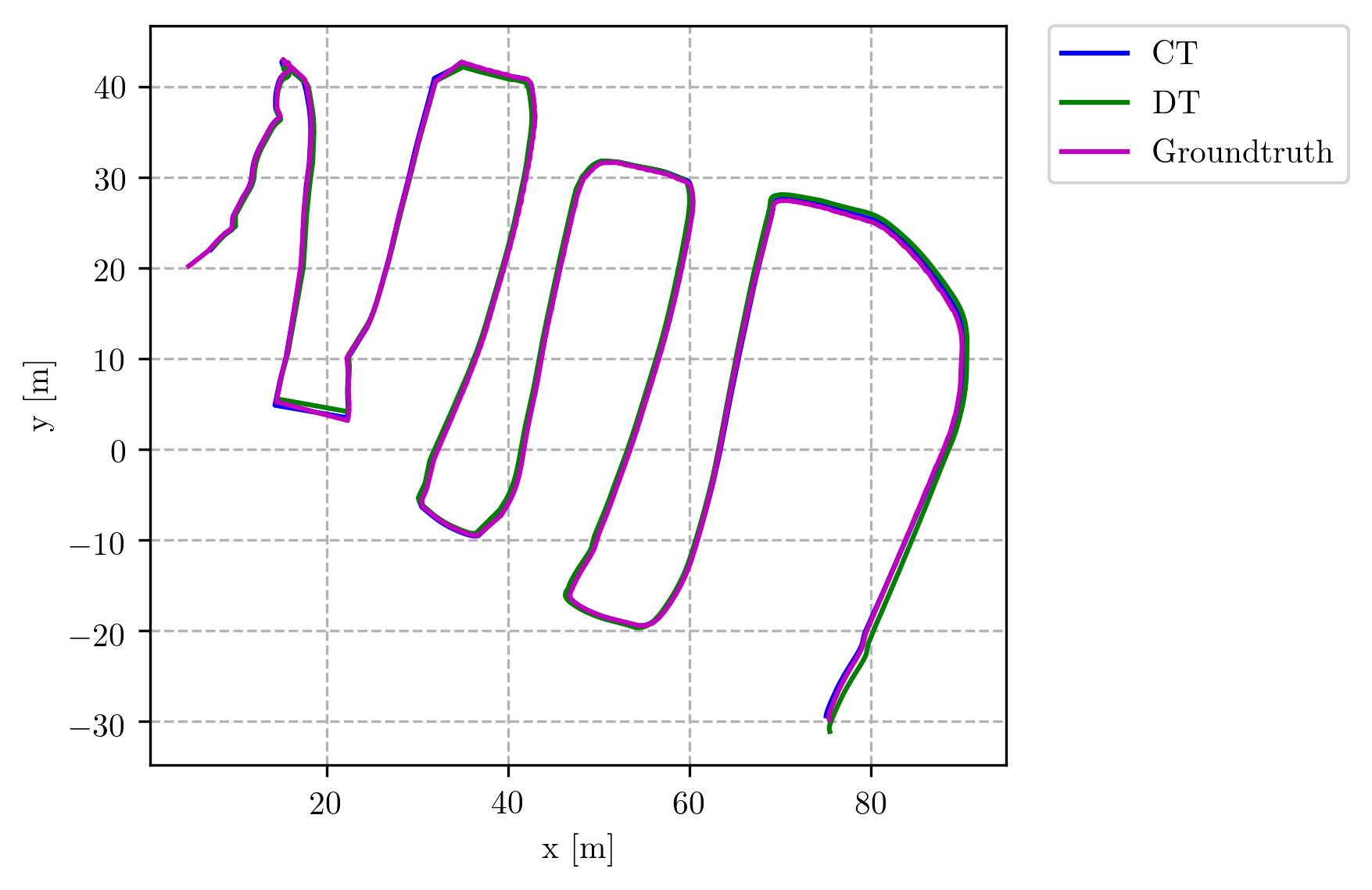

Iv-B Actual GPS with an outdoor flying robot

This dataset, courtesy of[24], contains outdoor flights of a flying robot equipped with a time-synchronized VI sensor (stereo camera and IMU), and a GPS receiver. GPS measurements are available at 5 Hz. We only use images from the left camera. The ground-truth position is provided by a Leica total station. Fig.3 shows the first trajectory of the dataset. The and the time offset estimates are in TableVII. For the continuous-time case, we used B-splines of order 6 and control node frequency of 10 Hz. As suggested by the results in Sec.IV-A, these values are suited for encoding flying robot trajectories. These results confirm the findings of Sec.IV-A: when the sensor are time-synchronized the two trajectory representations produce similar results, as shown by the similar values of and camera-IMU time offset.

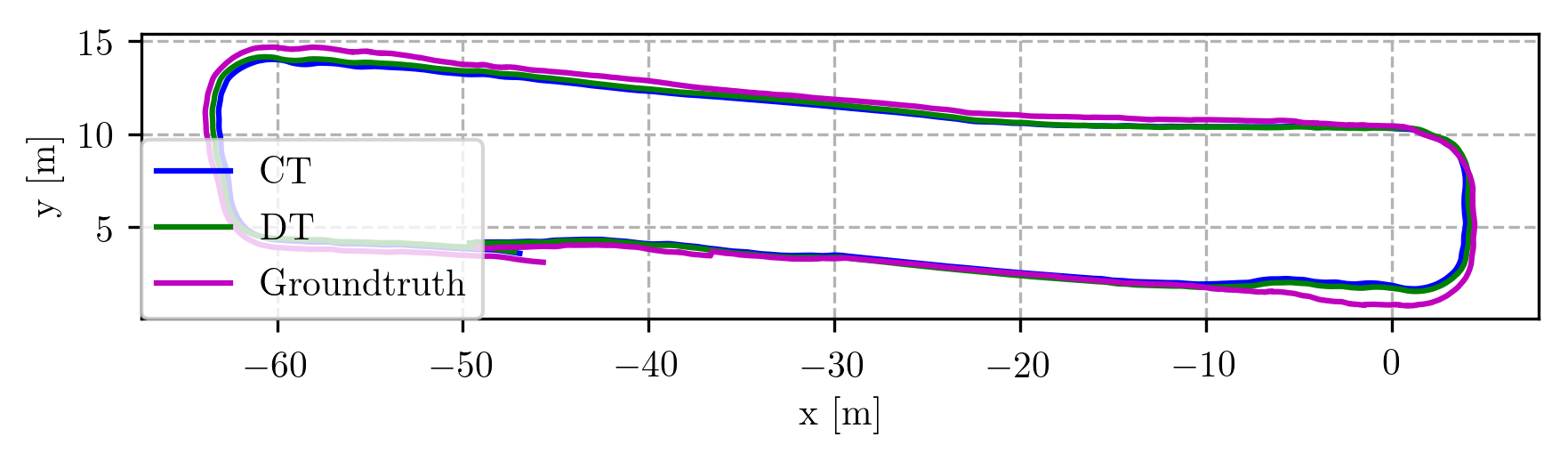

Iv-C Outdoor Trajectory: Ground robot

This experiment contains an evaluation of a trajectory recorded on-board a ground robot. The robot is equipped with a time-synchronized VI sensor (monocular camera and IMU) and a GPS antenna 1 1 1https://www.fixposition.com/ . The GPS measurements are available at 5 Hz. The 3-D position ground-truth is provided by a RTK-GPS system. Fig.4 shows the traveled trajectory of the robot. To study the influence of the B-spline order and control point frequency, we ran experiments with orders: 5, 6, and 7, and control node frequency: 10, 20, 100 Hz. Both continuous-time and discrete-time representations produce similar as reported in TableVIII. The estimated time offsets are similar for all the configurations listed in TableVIII.

In the continuous-time case, with B-spline of order 6 and control node frequency of 10 Hz, , and are -1.5 ms, and -26.0 ms, respectively. For the discrete-time case, they are -0.8 ms, and -36.3 ms. These results show that the findings of the experiments with a flying robot also apply to the case of a ground robot.

V Conclusions

The objective of this work is to compare continuous vs. discrete vision-based SLAM formulations to guide practitioners in the development of SLAM algorithms. We performed ablation and comparative studies in a hardware-in-the-loop simulation with full knowledge of the ground-truth. The ablation studies on the order and frequency of the control nodes of the B-splines suggest that it is necessary to use B-splines of order 6 and control node frequency of at least 10 Hz to accurately estimate the camera-IMU time offset. The comparative studies aimed at comparing continuous- and discrete-time trajectory representations with different levels of camera-IMU time delay. We find that when the camera and IMU are time-synchronized the two representations produce similar results. When a delay is present between the two measurement streams, the continuous-time representation is able to recover an accurate estimate of the time offset and consequently, produces lower ATE. In contrast, the discrete-time formulation fails in estimating the time offset, particularly when the robot moves fast, which consequently leads to high values of the ATE. The main reason of this result is that the assumption, which is necessary to estimate the camera-IMU time offset, of constant velocity of the camera motion in the period of time between consecutive camera frames does not always hold. The findings of the hardware-in-the-loop simulation agree with the results of the experiments on the real-world datasets containing data from aerial and ground robots. In addition, we evaluated the contribution of each sensor modality in an ablation study. We found that on the EuRoC dataset the most important sensor modality is vision. In most of the sequences, the lowest is obtained by aligning the camera trajectory obtained from COLMAP to a gravity aligned frame by using the noisy simulated GPS measurements. Including inertial measurements does not give any significant advantage.

Vi Acknowledgments

The authors would like to thank Torsten Sattler and Antonio Loquercio for the fruitful discussions, and the team at Fixposition for the ground robot dataset.

References

- [1] Ceres solver. Note: http://ceres-solver.org Cited by: §IV.

- [2] (2015) Batch nonlinear continuous-time trajectory estimation as exactly sparse gaussian process regression. Autonomous Robots 39 (3), pp. 221–238. Cited by: §II.

- [3] (2014) A hierarchical wavelet decomposition for continuous-time SLAM. In IEEE Int. Conf. Robot. Autom. (ICRA), Cited by: §II.

- [4] (2015) The EuRoC micro aerial vehicle datasets. Int. J. Robot. Research 35 (10), pp. 1157–1163. External Links: Document Cited by: §IV-A.

- [5] (2016) Past, present, and future of simultaneous localization and mapping: toward the robust-perception age. IEEE Trans. Robot. 32 (6), pp. 1309–1332. Cited by: §I, §I, §II.

- [6] (2001) A practical guide to splines. Springer Verlag New York. Cited by: §III-A.

- [7] (2019) An efficient b-spline-based kinodynamic replanning framework for quadrotors. IEEE Trans. Robot. 35 (6), pp. 1287–1306. Cited by: §I.

- [8] (2006) Simultaneous localization and mapping: part i. IEEE robotics & automation magazine 13 (2), pp. 99–110. Cited by: §I.

- [9] (2017) On-manifold preintegration for real-time visual-inertial odometry. IEEE Trans. Robot. 33 (1), pp. 1–21. External Links: Document Cited by: §I, §III-B2.

- [10] (2013) Unified temporal and spatial calibration for multi-sensor systems. In IEEE/RSJ Int. Conf. Intell. Robot. Syst. (IROS), External Links: Document Cited by: §I, §II, §III-A1, §III-A2, §IV-A1.

- [11] (2012) Continuous-time batch estimation using temporal basis functions. In IEEE Int. Conf. Robot. Autom. (ICRA), Cited by: §I, §II, §III-A2.

- [12] (2018) Survey of higher order rigid body motion interpolation methods for keyframe animation and continuous-time trajectory estimation. In 3D Vision (3DV), Cited by: §III-A.

- [13] (2020) On conceptualizing a framework for sensor fusion in continuous-time simultaneous localization and mapping. In 3D Vision (3DV), Cited by: §II.

- [14] (2020) Intermittent gps-aided vio: online initialization and calibration. In IEEE Int. Conf. Robot. Autom. (ICRA), Cited by: §II, §III-B.

- [15] (2018-12) Continuous-time visual-inertial odometry for event cameras. IEEE Trans. Robot. 34 (6), pp. 1425–1440. External Links: Document Cited by: §I, §II.

- [16] (2018) Spline error weighting for robust visual-inertial fusion. In IEEE Conf. Comput. Vis. Pattern Recog. (CVPR), Cited by: §I.

- [17] (2019) Trajectory representation and landmark projection for continuous-time structure from motion. Int. J. Robot. Research 38 (6), pp. 686–701. Cited by: §I, §II, §III-A.

- [18] (2000) General matrix representations for B-splines. The Visual Computer 16 (3–4), pp. 177–186. Cited by: §III-A.

- [19] (2018) VINS-Mono: a robust and versatile monocular visual-inertial state estimator. IEEE Trans. Robot. 34 (4), pp. 1004–1020. External Links: Document Cited by: §III-B.

- [20] (2018) Online temporal calibration for monocular visual-inertial systems. In IEEE/RSJ Int. Conf. Intell. Robot. Syst. (IROS), Cited by: §I, §II, §III-B, §IV-A2.

- [21] (2016) Structure-from-motion revisited. In IEEE Conf. Comput. Vis. Pattern Recog. (CVPR), Cited by: §III.

- [22] (2020) Efficient derivative computation for cumulative b-splines on lie groups. In IEEE Conf. Comput. Vis. Pattern Recog. (CVPR), Cited by: §II, §III-A2, §III-A.

- [23] (2016) Continuous-time estimation of attitude using b-splines on lie groups. Journal of Guidance, Control, and Dynamics 39 (2), pp. 242–261. Cited by: §III-A.

- [24] (2017) Robust Visual-Inertial Localization with Weak GPS Priors for Repetitive UAV Flights. In IEEE Int. Conf. Robot. Autom. (ICRA), Cited by: §IV-B.

- [25] (1991) Least-squares estimation of transformation parameters between two point patterns. IEEE Trans. Pattern Anal. Mach. Intell. 13 (4). Cited by: §III-A1, §III-A1, §III-B1.

- [26] (2021) Asynchronous multi-view slam. IEEE Int. Conf. Robot. Autom. (ICRA). Cited by: §I, §II.

- [27] (2018) A tutorial on quantitative trajectory evaluation for visual(-inertial) odometry. In IEEE/RSJ Int. Conf. Intell. Robot. Syst. (IROS), Cited by: §IV.

Source: https://deepai.org/publication/continuous-time-vs-discrete-time-vision-based-slam-a-comparative-study

0 Response to "Set point Regulation of Linear Continuous time Systems Using Neuromorphic Vision Sensors"

Post a Comment